You Spend a Lot on Survey Data. Don't Waste 40 Hours Matching It Manually

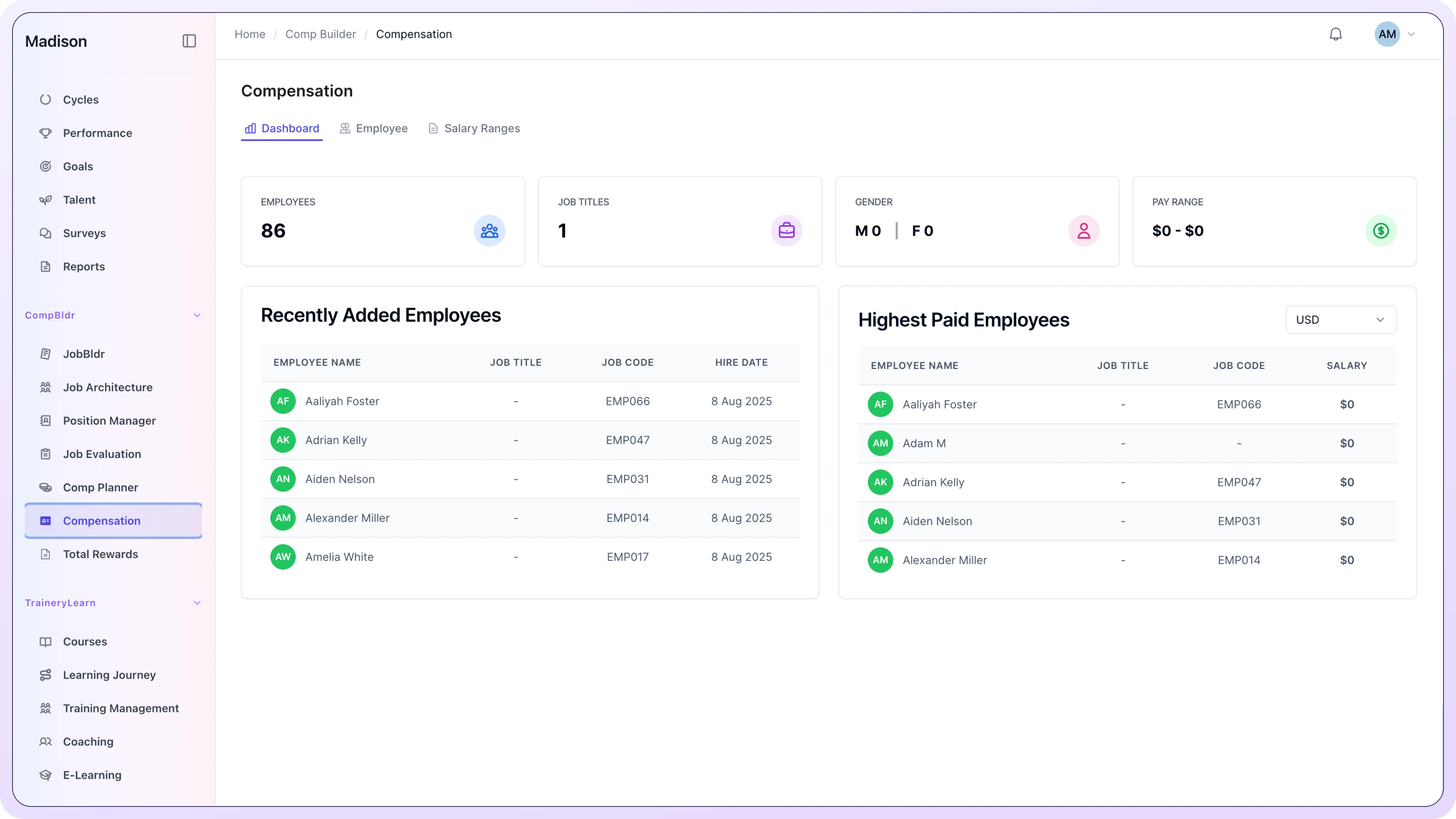

CompBldr can map Radford, Mercer, WTW, Salary.com, and other survey data directly to your internal survey data store. BLS salary data are also available for all clients. No spreadsheets.


Used by Comp Analysts and Total Rewards teams who have stopped rebuilding survey matches from scratch every cycle.

54%

of organizations say their salary ranges are out of date or not aligned to current market conditions

2.4x

higher voluntary turnover risk when compensation is perceived as below-market in critical roles

67%

of pay equity issues are linked to inconsistent or title-based role matching against market survey data

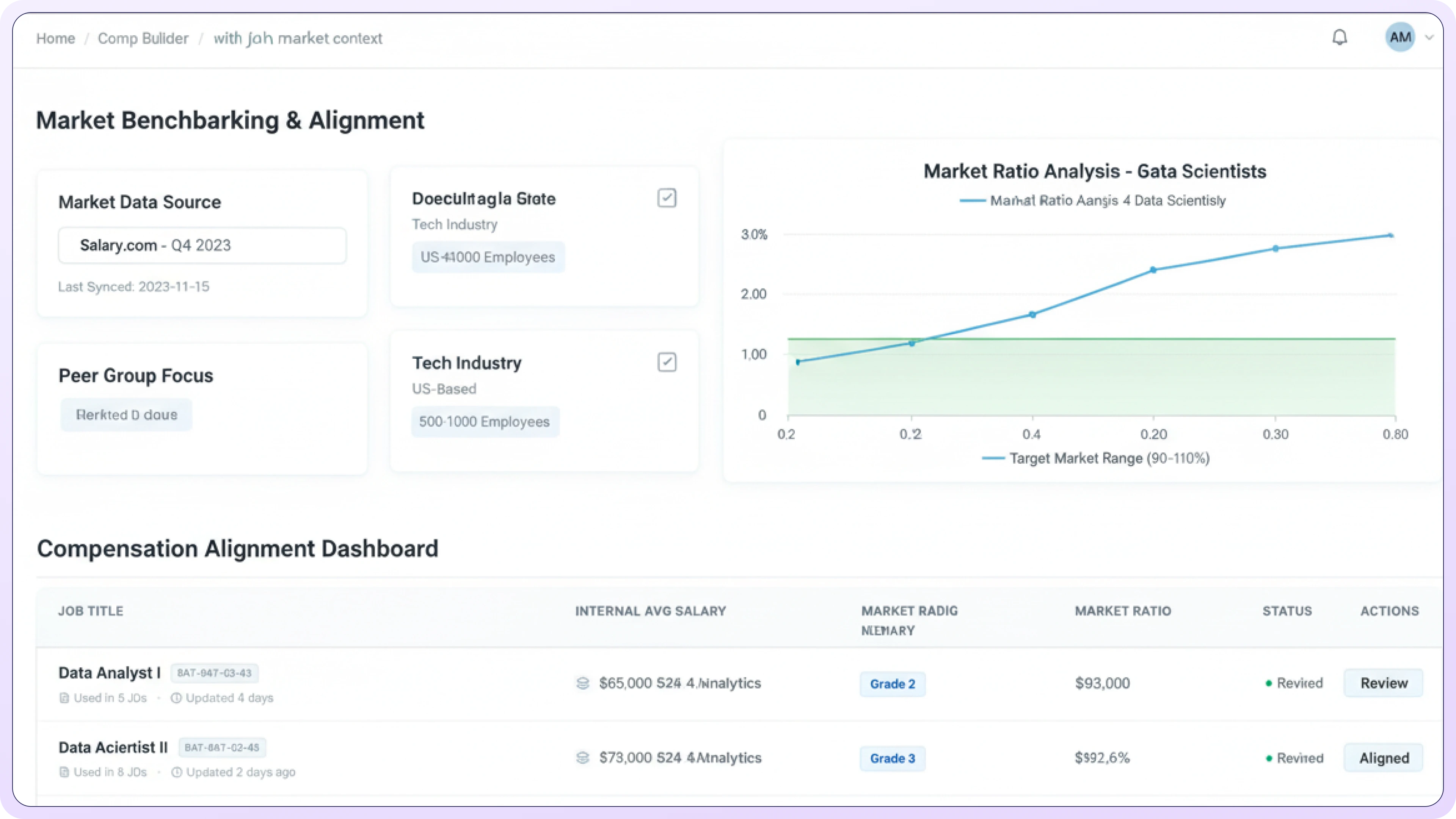

How Benchmarking Works When It's Built on Architecture

Because your job architecture already lives inside CompBldr, survey matching doesn't require a spreadsheet. It runs automatically, and the results are reproducible, versioned, and auditable.


Architecture-Linked Matching

Because your job architecture lives inside CompBldr, survey jobs map directly to your internal roles. No spreadsheet, no guesswork, no analyst weeks.

Multi-Survey Integration

Map Radford, Mercer, WTW, Salary.com, and other survey data directly to your internal survey data store. See your competitive position across multiple data sources in one view.


Percentile Analysis

Instantly see where every role sits against 25th, 50th, 75th, and 90th percentile benchmarks. Configure positioning by family, level, or geography.

Salary Band Generation

Market data feeds directly into salary band creation. Bands are both market-anchored and internally consistent, not a compromise between the two.

Stop Rebuilding the Survey Match From Scratch. CompBldr Keeps It Done

Here's what the benchmarking workflow looks like when it's built on your job architecture instead of a spreadsheet.

Blend Up to Six Data Sources. With Confidence Scoring.

Up to six compensation survey data sources are blended natively, with configurable weighting by job family. Confidence scores surface where coverage is thin or match quality is low, before those gaps influence outputs.

AI Maps Survey Titles to Your Roles. You Validate the Outliers.

AI-assisted matching uses your job architecture context (family, grade, and scope), not just title keywords. The result is more accurate matches with fewer manual corrections, every cycle.

Build Regression-Based Salary Structures. Without Exporting to Excel.

CompBldr builds salary structures directly from benchmarking data using regression analysis. Every structure is versioned, reproducible, and connected to your job architecture, with no spreadsheet rebuild required each cycle.
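For readers who want a feel for what a regression-based structure involves, here is a minimal sketch. It is not CompBldr's implementation: the function name `fit_midpoints`, the log-linear model, the input shape, and the 50% range spread are all assumptions chosen for illustration.

```python
import math

def fit_midpoints(market_points, grades, spread=0.5):
    """Fit ln(pay) = a + b * grade by ordinary least squares and
    return {grade: (minimum, midpoint, maximum)}.

    market_points: list of (grade, market_rate) pairs (hypothetical input shape).
    spread: range spread, (max - min) / min; 0.5 is an illustrative value.
    """
    xs = [g for g, _ in market_points]
    ys = [math.log(rate) for _, rate in market_points]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n

    # Ordinary least squares slope and intercept on the log scale.
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x

    structure = {}
    for g in grades:
        mid = math.exp(intercept + slope * g)
        # With midpoint = (min + max) / 2 and max = min * (1 + spread),
        # it follows that min = mid / (1 + spread / 2).
        minimum = mid / (1 + spread / 2)
        maximum = minimum * (1 + spread)
        structure[g] = (round(minimum), round(mid), round(maximum))
    return structure

# Blended market rates for three grades, projected to a fourth grade.
points = [(1, 50000), (2, 55000), (3, 60500)]
for grade, band in fit_midpoints(points, grades=[1, 2, 3, 4]).items():
    print(grade, band)
```

Fitting on the log scale keeps the percentage step between grades roughly constant, which is a common choice for pay structures; a straight linear fit is an equally valid alternative for narrow grade ranges.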

About Compensation Benchmarking

Software and Pay Band Development

CompBldr can blend multiple compensation data sources. We can also custom-build API integrations for additional survey data. In addition, you can upload Excel-based survey data for your job titles at any time using our dynamic upload capabilities.

A confidence score (0-1000) measures the statistical reliability of a blended market figure. It reflects the number of active sources, the match quality of each comparator, and the combined sample size behind the blended number. High-confidence data (green) is reliable for pay range decisions. Low-confidence data is flagged for review before use.

The Map Survey Title modal uses ML-based matching to rank survey titles for each organizational position based on the full job description, scope, and level, not just the title. Results are rated Confident, Strong, or Partial. Multiple titles from multiple sources can be selected in one session with individual effective dates before confirming the mapping.

Match quality badges rate how closely a survey title aligns with an organizational position. Exact (green) means direct alignment. Strong (blue) means closely related with minor scope differences. Partial (yellow) means overlapping but with meaningful scope variation. Match quality affects how each comparator is weighted in the blended market calculation for that position.

An aging factor adjusts survey compensation data for the time elapsed since the inclusion date, because older survey data understates current market pay. When the Aging Factor toggle is enabled, CompBldr automatically recalculates Adj PayRate for every comparator based on its inclusion date, with no manual per-row entry required.
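To make the idea concrete, the sketch below ages a pay rate forward from its inclusion date. The exact formula CompBldr applies is not described here, so the compounding approach, the function name `age_pay_rate`, and the 3% annual rate are assumptions for illustration only.

```python
from datetime import date

def age_pay_rate(pay_rate, inclusion_date, as_of, annual_aging_rate=0.03):
    """Age a survey pay rate forward from its inclusion date to as_of.

    Assumes annual compounding prorated by elapsed days; the 3% default
    is illustrative, not a CompBldr setting.
    """
    years_elapsed = (as_of - inclusion_date).days / 365.0
    if years_elapsed <= 0:
        return pay_rate  # data is current or dated in the future: nothing to age
    return pay_rate * (1 + annual_aging_rate) ** years_elapsed

# Hypothetical comparators, each with its own inclusion date.
comparators = [
    {"source": "Survey A", "pay_rate": 100000, "inclusion_date": date(2023, 1, 1)},
    {"source": "Survey B", "pay_rate": 98000, "inclusion_date": date(2023, 7, 1)},
]
as_of = date(2024, 1, 1)
for c in comparators:
    adjusted = age_pay_rate(c["pay_rate"], c["inclusion_date"], as_of)
    print(c["source"], round(adjusted, 2))
```

Because each comparator carries its own inclusion date, older data receives a larger adjustment than recent data, which is the behavior the toggle automates.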

Eight pre-built reports are included: MB-EX010 Market Comparison Report, MB-EX013 Market Comparison Summary, MB-EX011 Market Average Pay, MB-EX020 Pay Ranges and Pay Grades By Position, MB-EX016 Salary Budget, MB-EX001 Comparative Market Analysis (Staff), MB-EX002 Proposed Grade and Range Structure, and MB-EX003 Potential Costs to Implement Salary Ranges. Four analytical graphs are also included.

Finalize Reports locks the current benchmarking data into a versioned, auditable package. The package captures the source configuration, aging factor settings, match quality assignments, and all eight reports at the moment of finalization. Prior packages are preserved and retrievable. When a pay decision is questioned, the data behind it is one click away.

MB-EX019 displays side-by-side box plots per pay grade, comparing internal salary ranges (blue) against market data ranges (green). The median line shows the midpoint of each distribution. When the green box extends above the blue box for a given grade, internal ranges are lagging the market at that grade and warrant structural review.
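The same comparison can be approximated in a few lines. This is a sketch, not the report's logic: it assumes the top of each box is the third quartile, and the function name `lagging_grades` and the input dictionaries are hypothetical.

```python
from statistics import quantiles

def lagging_grades(internal_by_grade, market_by_grade):
    """Return grades where the market box extends above the internal box.

    Assumes the upper edge of each box is the third quartile (Q3).
    Both arguments map a grade to a list of at least two pay values.
    """
    flagged = []
    for grade in sorted(internal_by_grade):
        market = market_by_grade.get(grade)
        if not market:
            continue  # no market data for this grade, nothing to compare
        internal_q3 = quantiles(internal_by_grade[grade], n=4)[2]
        market_q3 = quantiles(market, n=4)[2]
        if market_q3 > internal_q3:
            flagged.append(grade)
    return flagged

# Illustrative values in thousands.
internal = {1: [40, 45, 50, 55, 60], 2: [60, 65, 70, 75, 80]}
market = {1: [38, 44, 49, 54, 58], 2: [65, 72, 78, 85, 90]}
print(lagging_grades(internal, market))
```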

Yes. Each organizational position can carry any number of survey title comparators across any combination of active data sources. In the example, Senior Software Engineer carries six comparators: two from Salary.com and one each from BLS, Radford, Mercer, and WTW. The blended market number weights each comparator by match quality and sample size.
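As a rough illustration of weighting comparators by match quality and sample size, consider the sketch below. The numeric weights per match quality, the field names, and the function name `blended_market_rate` are hypothetical; the weights CompBldr actually applies are not stated here.

```python
# Hypothetical weights per match quality badge; not CompBldr's actual values.
MATCH_WEIGHTS = {"Exact": 1.0, "Strong": 0.75, "Partial": 0.5}

def blended_market_rate(comparators):
    """Weighted average of comparator rates.

    Each comparator's weight is its match-quality weight multiplied by
    its sample size, so well-matched, well-sampled data counts for more.
    """
    total_weight = 0.0
    weighted_sum = 0.0
    for c in comparators:
        weight = MATCH_WEIGHTS[c["match"]] * c["sample_size"]
        weighted_sum += weight * c["rate"]
        total_weight += weight
    if total_weight == 0:
        raise ValueError("no comparators with usable weight")
    return weighted_sum / total_weight

# Two illustrative comparators for one position.
comparators = [
    {"source": "Survey A", "rate": 100000, "match": "Exact", "sample_size": 100},
    {"source": "Survey B", "rate": 120000, "match": "Partial", "sample_size": 200},
]
print(blended_market_rate(comparators))  # 110000.0
```

In this example the Partial match has twice the sample size but half the quality weight, so both comparators end up contributing equally to the blended figure.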

Benchmarking Built on Architecture, Not Guesswork

Accurate competitive pay positioning every cycle with a fraction of the effort.

No credit card · 15-minute walkthrough · Most teams invest $25K–$120K/yr
